Project flow#

LaminDB allows tracking data lineage at the level of an entire project.

Here, we walk through example app uploads, pipelines & notebooks following Schmidt et al., 2022.

A CRISPR screen reading out a phenotypic endpoint on T cells is paired with scRNA-seq to generate insights into IFN-γ production.

These insights get linked back to the original data through the steps taken in the project to provide context for interpretation & future decision making.

More specifically: Why should I care about data flow?

Data flow tracks data sources & transformations to trace biological insights, verify experimental outcomes, meet regulatory standards, increase the robustness of research and optimize the feedback loop of team-wide learning iterations.

While tracking data flow is easier when it’s governed by deterministic pipelines, it becomes hard when it’s governed by interactive human-driven analyses.

LaminDB interfaces with workflow managers for the former and embraces the latter.

Setup#

Init a test instance:

!lamin init --storage ./mydata


💡 connected lamindb: testuser1/mydata

Import lamindb:

import lamindb as ln

from IPython.display import Image, display

💡 connected lamindb: testuser1/mydata

Steps#

In the following, we walk through example steps covering different types of transforms (Transform).

Note

The full notebooks are in this repository.

App upload of phenotypic data#

Register data through app upload from wetlab by testuser1:

# This function mimics the upload of artifacts via the UI
# In reality, you simply drag and drop files into the UI
def run_upload_crispra_result_app():
    ln.setup.login("testuser1")
    transform = ln.Transform(name="Upload GWS CRISPRa result", type="app")
    ln.track(transform=transform)
    output_path = ln.core.datasets.schmidt22_crispra_gws_IFNG(ln.settings.storage)
    output_file = ln.Artifact(
        output_path, description="Raw data of schmidt22 crispra GWS"
    )
    output_file.save()


run_upload_crispra_result_app()


💡 saved: Transform(uid='bl05Pd2Sv77D2Hei', name='Upload GWS CRISPRa result', type='app', updated_at=2024-04-18 09:10:10 UTC, created_by_id=1)

💡 saved: Run(uid='gdFhXZbPaA6rc1EWw77f', transform_id=1, created_by_id=1)

Hit identification in notebook#

Access, transform & register data in drylab by testuser2:

def run_hit_identification_notebook():
    # log in as another user
    ln.setup.login("testuser2")

    # create a new transform to mimic a new notebook (in reality you just run ln.track() in a notebook)
    transform = ln.Transform(name="GWS CRIPSRa analysis", type="notebook")
    ln.track(transform=transform)

    # access the upload artifact
    input_file = ln.Artifact.filter(key="schmidt22-crispra-gws-IFNG.csv").one()

    # identify hits
    input_df = input_file.load().set_index("id")
    output_df = input_df[input_df["pos|fdr"] < 0.01].copy()

    # register hits in output artifact
    ln.Artifact(output_df, description="hits from schmidt22 crispra GWS").save()


run_hit_identification_notebook()


💡 saved: Transform(uid='HjPqSbaXb2nuwmIx', name='GWS CRIPSRa analysis', type='notebook', updated_at=2024-04-18 09:10:11 UTC, created_by_id=1)

💡 saved: Run(uid='nhXnn10zPmATxAhzsDYv', transform_id=2, created_by_id=1)

❗ data is a DataFrame, please use .from_df()
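The hit-identification step above is a plain threshold on the `pos|fdr` column. As a minimal, dependency-free sketch of that filter, the snippet below applies the same cutoff to a few made-up rows. The gene ids and FDR values are invented for illustration and are not taken from Schmidt et al.; the helper name `identify_hits` is hypothetical.

```python
import csv
import io

# Toy screen results; ids and FDR values are invented for illustration only.
toy_csv = """id,pos|fdr
GENE_A,0.001
GENE_B,0.2
GENE_C,0.009
GENE_D,0.01
"""

FDR_THRESHOLD = 0.01  # same cutoff as in the notebook step above


def identify_hits(csv_text, threshold=FDR_THRESHOLD):
    """Return the ids whose positive-selection FDR is strictly below the threshold."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row["id"] for row in reader if float(row["pos|fdr"]) < threshold]


hits = identify_hits(toy_csv)
print(hits)
```

Because the comparison is strict, a row sitting exactly at the threshold (GENE_D here) is not reported as a hit.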

Inspect data flow:

artifact = ln.Artifact.filter(description="hits from schmidt22 crispra GWS").one()

artifact.view_lineage()

Sequencer upload#

Upload files from sequencer:

def run_upload_from_sequencer_pipeline():
    ln.setup.login("testuser1")

    # create a pipeline transform
    ln.track(transform=ln.Transform(name="Chromium 10x upload", type="pipeline"))

    # register output files of the sequencer
    upload_dir = ln.core.datasets.dir_scrnaseq_cellranger(
        "perturbseq", basedir=ln.settings.storage, output_only=False
    )
    ln.Artifact(upload_dir.parent / "fastq/perturbseq_R1_001.fastq.gz").save()
    ln.Artifact(upload_dir.parent / "fastq/perturbseq_R2_001.fastq.gz").save()


run_upload_from_sequencer_pipeline()


💡 saved: Transform(uid='NIIKiTU4C6xElS88', name='Chromium 10x upload', type='pipeline', updated_at=2024-04-18 09:10:12 UTC, created_by_id=1)

💡 saved: Run(uid='051FEvOKRcbNOkYzWnhp', transform_id=3, created_by_id=1)

scRNA-seq bioinformatics pipeline#

Process the uploaded files using a script or workflow manager (see Pipelines) and obtain 3 output files in the directory filtered_feature_bc_matrix/:

def run_scrna_analysis_pipeline():
    ln.setup.login("testuser2")
    transform = ln.Transform(name="Cell Ranger", version="7.2.0", type="pipeline")
    ln.track(transform=transform)

    # access uploaded files as inputs for the pipeline
    input_artifacts = ln.Artifact.filter(key__startswith="fastq/perturbseq").all()
    input_paths = [artifact.cache() for artifact in input_artifacts]

    # register output files
    output_artifacts = ln.Artifact.from_dir(
        "./mydata/perturbseq/filtered_feature_bc_matrix/"
    )
    ln.save(output_artifacts)

    # Post-process these 3 files
    transform = ln.Transform(
        name="Postprocess Cell Ranger", version="2.0", type="pipeline"
    )
    ln.track(transform=transform)
    input_artifacts = [f.cache() for f in output_artifacts]
    output_path = ln.core.datasets.schmidt22_perturbseq(basedir=ln.settings.storage)
    output_file = ln.Artifact(output_path, description="perturbseq counts")
    output_file.save()


run_scrna_analysis_pipeline()


💡 saved: Transform(uid='gnGZfJnBNoEzDHDr', name='Cell Ranger', version='7.2.0', type='pipeline', updated_at=2024-04-18 09:10:13 UTC, created_by_id=1)

💡 saved: Run(uid='RJOudKgK3yAokOMo4Tbf', transform_id=4, created_by_id=1)

❗ this creates one artifact per file in the directory - you might simply call ln.Artifact(dir) to get one artifact for the entire directory

💡 saved: Transform(uid='c6u4TdrKGPtq6VUe', name='Postprocess Cell Ranger', version='2.0', type='pipeline', updated_at=2024-04-18 09:10:14 UTC, created_by_id=1)

💡 saved: Run(uid='aYBpI3B1w3Z5tPGExLj1', transform_id=5, created_by_id=1)

Inspect data flow:

output_file = ln.Artifact.filter(description="perturbseq counts").one()

output_file.view_lineage()
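To make the idea behind the lineage view concrete, here is a minimal sketch that models artifacts and runs as nodes with upstream links and walks them back to the source. It is not LaminDB's implementation: the `Node` class and `upstream` helper are hypothetical, and the graph is written out by hand from the sequencer upload and pipeline steps above.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical lineage node: either an artifact or a run of a transform."""

    name: str
    kind: str  # "artifact" or "run"
    inputs: list = field(default_factory=list)  # direct upstream nodes


def upstream(node):
    """Collect the names of all nodes upstream of `node` (depth-first, no duplicates)."""
    seen, order, stack = set(), [], list(node.inputs)
    while stack:
        current = stack.pop()
        if current.name in seen:
            continue
        seen.add(current.name)
        order.append(current.name)
        stack.extend(current.inputs)
    return order


# Hand-written graph mirroring the steps above.
upload = Node("Chromium 10x upload", "run")
r1 = Node("fastq/perturbseq_R1_001.fastq.gz", "artifact", [upload])
r2 = Node("fastq/perturbseq_R2_001.fastq.gz", "artifact", [upload])
cellranger = Node("Cell Ranger", "run", [r1, r2])
matrix = Node("filtered_feature_bc_matrix/", "artifact", [cellranger])
postprocess = Node("Postprocess Cell Ranger", "run", [matrix])
counts = Node("perturbseq counts", "artifact", [postprocess])

lineage = upstream(counts)
print(lineage)
```

Starting from the "perturbseq counts" artifact, the walk reaches both FASTQ files and the upload run that registered them, which is the same chain the lineage graph displays.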

Integrate scRNA-seq & phenotypic data#

Integrate data in a notebook:

def run_integrated_analysis_notebook():

import scanpy as sc

# create a new transform to mimic a new notebook (in reality you just run ln.track() in a notebook)

transform = ln.Transform(

name="Perform single cell analysis, integrate with CRISPRa screen",

type="notebook",

)

ln.track(transform=transform)

# access the output files of bfx pipeline and previous analysis

file_ps = ln.Artifact.filter(description__icontains="perturbseq").one()

adata = file_ps.load()

file_hits = ln.Artifact.filter(description="hits from schmidt22 crispra GWS").one()

screen_hits = file_hits.load()

# perform analysis and register output plot files

sc.tl.score_genes(adata, adata.var_names.intersection(screen_hits.index).tolist())

filesuffix = "_fig1_score-wgs-hits.png"

sc.pl.umap(adata, color="score", show=False, save=filesuffix)

filepath = f"figures/umap{filesuffix}"

artifact = ln.Artifact(filepath, key=filepath)

artifact.save()

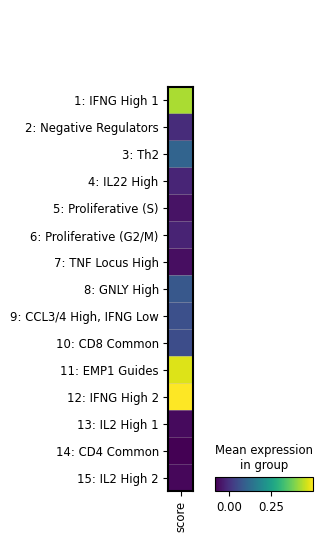

filesuffix = "fig2_score-wgs-hits-per-cluster.png"

sc.pl.matrixplot(

adata, groupby="cluster_name", var_names=["score"], show=False, save=filesuffix

)

filepath = f"figures/matrixplot_{filesuffix}"

artifact = ln.Artifact(filepath, key=filepath)

artifact.save()

run_integrated_analysis_notebook()


💡 saved: Transform(uid='5TBciFk5ofchaIc0', name='Perform single cell analysis, integrate with CRISPRa screen', type='notebook', updated_at=2024-04-18 09:10:15 UTC, created_by_id=1)

💡 saved: Run(uid='SWx4YnHEWw3WBpjfrOfq', transform_id=6, created_by_id=1)

WARNING: saving figure to file figures/umap_fig1_score-wgs-hits.png

WARNING: saving figure to file figures/matrixplot_fig2_score-wgs-hits-per-cluster.png

Review results#

Let’s load one of the plots:

# track the current notebook as transform

ln.settings.transform.stem_uid = "1LCd8kco9lZU"

ln.settings.transform.version = "0"

ln.track()

💡 notebook imports: ipython==8.23.0 lamindb==0.70.0 scanpy==1.10.1

💡 saved: Transform(uid='1LCd8kco9lZU6K79', name='Project flow', key='project-flow', version='0', type='notebook', updated_at=2024-04-18 09:10:16 UTC, created_by_id=1)

💡 saved: Run(uid='0U6vvjnfFmBmMg2r602J', transform_id=7, created_by_id=1)

artifact = ln.Artifact.filter(key__contains="figures/matrixplot").one()

artifact.cache()


PosixUPath('/home/runner/work/lamin-usecases/lamin-usecases/docs/mydata/.lamindb/3yx9wJh4Z54xFtAw0ydP.png')

display(Image(filename=artifact.path))

We see that the image artifact is tracked as an input of the current notebook. The input is highlighted, the notebook follows at the bottom:

artifact.view_lineage()

Alternatively, we can also look at the sequence of transforms:

transform = ln.Transform.search("Bird's eye view", return_queryset=True).first()

transform.parents.df()

| id | uid | name | key | version | description | type | latest_report_id | source_code_id | reference | reference_type | created_at | updated_at | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 4 | gnGZfJnBNoEzDHDr | Cell Ranger | None | 7.2.0 | None | pipeline | None | None | None | None | 2024-04-18 09:10:13.580309+00:00 | 2024-04-18 09:10:13.580329+00:00 | 1 |

transform.view_parents()
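Viewing the sequence of transforms amounts to ordering them so that every transform comes after its parents. The sketch below does this with the standard-library `graphlib` module. The `parents` mapping is written out by hand from the steps in this guide (which transform read which artifacts) rather than queried from an instance, so treat it as an illustration, not as output of LaminDB.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Map each transform to the set of transforms it depends on (its parents).
# Edges are read off the steps in this guide; the dict itself is illustrative.
parents = {
    "Upload GWS CRISPRa result": set(),
    "GWS CRIPSRa analysis": {"Upload GWS CRISPRa result"},
    "Chromium 10x upload": set(),
    "Cell Ranger": {"Chromium 10x upload"},
    "Postprocess Cell Ranger": {"Cell Ranger"},
    "Perform single cell analysis, integrate with CRISPRa screen": {
        "GWS CRIPSRa analysis",
        "Postprocess Cell Ranger",
    },
    "Project flow": {"Perform single cell analysis, integrate with CRISPRa screen"},
}

# static_order() yields every transform only after all of its parents.
order = list(TopologicalSorter(parents).static_order())
for position, name in enumerate(order, start=1):
    print(position, name)
```

The two upload transforms have no parents and can appear in either order, while "Project flow" always comes last because every other transform is one of its ancestors.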

Understand runs#

We tracked pipeline and notebook runs through run_context, which stores a Transform and a Run record as a global context.

Artifact objects are the inputs and outputs of runs.

What if I don’t want a global context?

Sometimes, we don’t want to create a global run context but manually pass a run when creating an artifact:

run = ln.Run(transform=transform)

ln.Artifact(filepath, run=run)
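The difference between a global context and an explicitly passed run can be sketched in plain Python. Everything below is hypothetical stand-in code (the `_context` dict, `track`, and the toy `Run` and `Artifact` classes are not LaminDB's classes); it only illustrates the rule that an explicitly passed run takes precedence and the global context is the fallback.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Run:
    transform_name: str


# Hypothetical stand-in for a global run context; None means "nothing tracked yet".
_context = {"run": None}


def track(transform_name: str) -> Run:
    """Set the global run context (toy analogue of calling ln.track())."""
    _context["run"] = Run(transform_name)
    return _context["run"]


@dataclass
class Artifact:
    path: str
    run: Optional[Run] = None

    def __post_init__(self):
        # An explicitly passed run wins; otherwise fall back to the global context.
        if self.run is None:
            self.run = _context["run"]


explicit = Artifact("figures/a.png", run=Run("manual transform"))
untracked = Artifact("figures/b.png")  # no global context yet, so run stays None
track("notebook transform")
from_context = Artifact("figures/c.png")  # picks up the global context
print(explicit.run, untracked.run, from_context.run)
```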

When does an artifact appear as a run input?

When accessing an artifact via cache(), load() or backed(), two things happen:

1. The current run gets added to artifact.input_of
2. The transform of that artifact gets added as a parent of the current transform

You can switch off auto-tracking of run inputs by setting ln.settings.track_run_inputs = False (see: Can I disable tracking run inputs?).

You can also track run inputs on a case-by-case basis via is_run_input=True, e.g.:

artifact.load(is_run_input=True)
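The bookkeeping described above can be sketched with a few toy classes. This is a simplified model under stated assumptions, not LaminDB's code: `Settings`, `Transform`, `Run` and `Artifact` are hypothetical stand-ins, and `load` takes the current run as an explicit argument to keep the example self-contained.

```python
class Settings:
    track_run_inputs = True  # hypothetical analogue of ln.settings.track_run_inputs


class Transform:
    def __init__(self, name):
        self.name = name
        self.parents = []  # transforms whose outputs this transform consumed


class Run:
    def __init__(self, transform):
        self.transform = transform


class Artifact:
    def __init__(self, key, transform):
        self.key = key
        self.transform = transform  # transform that created the artifact
        self.input_of = []  # runs that consumed the artifact

    def load(self, current_run, is_run_input=None):
        """Pretend to load data and optionally record the access as a run input."""
        # A per-call flag overrides the global setting.
        track = Settings.track_run_inputs if is_run_input is None else is_run_input
        if track:
            if current_run not in self.input_of:
                self.input_of.append(current_run)
            if self.transform not in current_run.transform.parents:
                current_run.transform.parents.append(self.transform)
        return f"<data of {self.key}>"


upload = Transform("Upload GWS CRISPRa result")
analysis_run = Run(Transform("GWS CRIPSRa analysis"))
raw = Artifact("schmidt22-crispra-gws-IFNG.csv", upload)

Settings.track_run_inputs = False
raw.load(analysis_run)  # auto-tracking is off: nothing is recorded
recorded_before = list(raw.input_of)
raw.load(analysis_run, is_run_input=True)  # recorded for this call only
print(recorded_before, [t.name for t in analysis_run.transform.parents])
```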

Query by provenance#

We can query or search for the notebook that created the artifact:

transform = ln.Transform.search("GWS CRIPSRa analysis", return_queryset=True).first()

And then find all the artifacts created by that notebook:

ln.Artifact.filter(transform=transform).df()

| id | uid | storage_id | key | suffix | accessor | description | version | size | hash | hash_type | n_objects | n_observations | transform_id | run_id | visibility | key_is_virtual | created_at | updated_at | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 2 | 3kC0qDrem4nTEhoaNhgj | 1 | None | .parquet | DataFrame | hits from schmidt22 crispra GWS | None | 18368 | R18ae1iumXFrQmlf6ZRRGg | md5 | None | None | 2 | 2 | 1 | True | 2024-04-18 09:10:12.353494+00:00 | 2024-04-18 09:10:12.353519+00:00 | 1 |

Which transform ingested a given artifact?

artifact = ln.Artifact.filter().first()

artifact.transform

Transform(uid='bl05Pd2Sv77D2Hei', name='Upload GWS CRISPRa result', type='app', updated_at=2024-04-18 09:10:10 UTC, created_by_id=1)

And which user?

artifact.created_by

User(uid='DzTjkKse', handle='testuser1', name='Test User1', updated_at=2024-04-18 09:10:12 UTC)

Which transforms were created by a given user?

users = ln.User.lookup()

ln.Transform.filter(created_by=users.testuser2).df()

| id | uid | name | key | version | description | type | reference | reference_type | created_at | updated_at | latest_report_id | source_code_id | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Which notebooks were created by a given user?

ln.Transform.filter(created_by=users.testuser2, type="notebook").df()

| id | uid | name | key | version | description | type | reference | reference_type | created_at | updated_at | latest_report_id | source_code_id | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
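Conceptually, the provenance queries above are filters and joins over a handful of related tables. The sketch below reproduces them with the standard-library `sqlite3` module on a hypothetical, heavily simplified schema. The table and column names are illustrative and not LaminDB's actual schema; the rows are copied from the outputs shown in this guide, including `created_by_id=1` on every transform.

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.executescript(
    """
    CREATE TABLE users (id INTEGER PRIMARY KEY, handle TEXT);
    CREATE TABLE transform (
        id INTEGER PRIMARY KEY, name TEXT, type TEXT, created_by_id INTEGER
    );
    CREATE TABLE artifact (
        id INTEGER PRIMARY KEY, description TEXT, transform_id INTEGER
    );
    INSERT INTO users VALUES (1, 'testuser1'), (2, 'testuser2');
    INSERT INTO transform VALUES
        (1, 'Upload GWS CRISPRa result', 'app', 1),
        (2, 'GWS CRIPSRa analysis', 'notebook', 1);
    INSERT INTO artifact VALUES
        (1, 'Raw data of schmidt22 crispra GWS', 1),
        (2, 'hits from schmidt22 crispra GWS', 2);
    """
)


def artifacts_created_by(transform_name):
    """Descriptions of all artifacts whose creating transform has the given name."""
    rows = con.execute(
        "SELECT a.description FROM artifact a "
        "JOIN transform t ON a.transform_id = t.id WHERE t.name = ? ORDER BY a.id",
        (transform_name,),
    ).fetchall()
    return [row[0] for row in rows]


def transforms_by_user(handle, type_=None):
    """Names of transforms created by a user, optionally restricted to one type."""
    sql = (
        "SELECT t.name FROM transform t "
        "JOIN users u ON t.created_by_id = u.id WHERE u.handle = ?"
    )
    params = [handle]
    if type_ is not None:
        sql += " AND t.type = ?"
        params.append(type_)
    sql += " ORDER BY t.id"
    return [row[0] for row in con.execute(sql, params).fetchall()]


print(artifacts_created_by("GWS CRIPSRa analysis"))
print(transforms_by_user("testuser2"))
print(transforms_by_user("testuser1", type_="notebook"))
```

In the recorded outputs of this guide every transform carries `created_by_id=1`, which is consistent with the two filters for testuser2 above returning empty tables.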

We can also view all recent additions to the entire database:

ln.view()


Artifact

| id | uid | storage_id | key | suffix | accessor | description | version | size | hash | hash_type | n_objects | n_observations | transform_id | run_id | visibility | key_is_virtual | created_at | updated_at | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 10 | 3yx9wJh4Z54xFtAw0ydP | 1 | figures/matrixplot_fig2_score-wgs-hits-per-clu... | .png | None | None | None | 28814 | 8zXF_cVwaZnfhmrLbt_0kA | md5 | None | None | 6 | 6 | 1 | True | 2024-04-18 09:10:16.691734+00:00 | 2024-04-18 09:10:16.691759+00:00 | 1 |
| 9 | YIZu07NFi5eRhKUICOAx | 1 | figures/umap_fig1_score-wgs-hits.png | .png | None | None | None | 118999 | DCFDLUMF-UohaBvkThn0mA | md5 | None | None | 6 | 6 | 1 | True | 2024-04-18 09:10:16.379306+00:00 | 2024-04-18 09:10:16.379330+00:00 | 1 |
| 8 | sWJ3tC9xP14qzI6FUbgu | 1 | schmidt22_perturbseq.h5ad | .h5ad | None | perturbseq counts | None | 20659936 | la7EvqEUMDlug9-rpw-udA | md5 | None | None | 5 | 5 | 1 | False | 2024-04-18 09:10:14.875597+00:00 | 2024-04-18 09:10:14.875628+00:00 | 1 |
| 7 | iQHJ7vzIvZVcEL8ST8Fk | 1 | perturbseq/filtered_feature_bc_matrix/matrix.m... | .mtx.gz | None | None | None | 6 | XJSUH1BiznMexjGxkX9DkA | md5 | None | None | 4 | 4 | 1 | False | 2024-04-18 09:10:14.030615+00:00 | 2024-04-18 09:10:14.030632+00:00 | 1 |
| 6 | KDYfh1bw0KyrBMtRq8YC | 1 | perturbseq/filtered_feature_bc_matrix/features... | .tsv.gz | None | None | None | 6 | CecicQgYL6nJnUHnQlQ23Q | md5 | None | None | 4 | 4 | 1 | False | 2024-04-18 09:10:14.030018+00:00 | 2024-04-18 09:10:14.030037+00:00 | 1 |
| 5 | gambevyYYQaI42BzdGgs | 1 | perturbseq/filtered_feature_bc_matrix/barcodes... | .tsv.gz | None | None | None | 6 | Bo6xEIToMiCqgQ1keksA4g | md5 | None | None | 4 | 4 | 1 | False | 2024-04-18 09:10:14.029278+00:00 | 2024-04-18 09:10:14.029297+00:00 | 1 |
| 4 | Z3vyUPeWP1boWBEpo094 | 1 | fastq/perturbseq_R2_001.fastq.gz | .fastq.gz | None | None | None | 6 | YJiVqotQ5F8WPwQAkErMdw | md5 | None | None | 3 | 3 | 1 | False | 2024-04-18 09:10:13.240028+00:00 | 2024-04-18 09:10:13.240047+00:00 | 1 |

Run

| id | uid | transform_id | started_at | finished_at | created_by_id | json | report_id | environment_id | is_consecutive | reference | reference_type | created_at |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | gdFhXZbPaA6rc1EWw77f | 1 | 2024-04-18 09:10:10.866267+00:00 | None | 1 | None | None | None | True | None | None | 2024-04-18 09:10:10.866452+00:00 |
| 2 | nhXnn10zPmATxAhzsDYv | 2 | 2024-04-18 09:10:11.881720+00:00 | None | 1 | None | None | None | True | None | None | 2024-04-18 09:10:11.881818+00:00 |
| 3 | 051FEvOKRcbNOkYzWnhp | 3 | 2024-04-18 09:10:12.805706+00:00 | None | 1 | None | None | None | True | None | None | 2024-04-18 09:10:12.805868+00:00 |
| 4 | RJOudKgK3yAokOMo4Tbf | 4 | 2024-04-18 09:10:13.583563+00:00 | None | 1 | None | None | None | True | None | None | 2024-04-18 09:10:13.583655+00:00 |
| 5 | aYBpI3B1w3Z5tPGExLj1 | 5 | 2024-04-18 09:10:14.042620+00:00 | None | 1 | None | None | None | True | None | None | 2024-04-18 09:10:14.042710+00:00 |
| 6 | SWx4YnHEWw3WBpjfrOfq | 6 | 2024-04-18 09:10:15.705007+00:00 | None | 1 | None | None | None | True | None | None | 2024-04-18 09:10:15.705103+00:00 |
| 7 | 0U6vvjnfFmBmMg2r602J | 7 | 2024-04-18 09:10:16.991799+00:00 | None | 1 | None | None | None | True | None | None | 2024-04-18 09:10:16.991892+00:00 |

Storage

| id | uid | root | description | type | region | created_at | updated_at | created_by_id |
|---|---|---|---|---|---|---|---|---|
| 1 | flqsO3PN | /home/runner/work/lamin-usecases/lamin-usecase... | None | local | None | 2024-04-18 09:10:09.312522+00:00 | 2024-04-18 09:10:09.312541+00:00 | 1 |

Transform

| id | uid | name | key | version | description | type | latest_report_id | source_code_id | reference | reference_type | created_at | updated_at | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 7 | 1LCd8kco9lZU6K79 | Project flow | project-flow | 0 | None | notebook | None | None | None | None | 2024-04-18 09:10:16.986664+00:00 | 2024-04-18 09:10:16.986706+00:00 | 1 |
| 6 | 5TBciFk5ofchaIc0 | Perform single cell analysis, integrate with C... | None | None | None | notebook | None | None | None | None | 2024-04-18 09:10:15.700119+00:00 | 2024-04-18 09:10:15.700150+00:00 | 1 |
| 5 | c6u4TdrKGPtq6VUe | Postprocess Cell Ranger | None | 2.0 | None | pipeline | None | None | None | None | 2024-04-18 09:10:14.039224+00:00 | 2024-04-18 09:10:14.039244+00:00 | 1 |
| 4 | gnGZfJnBNoEzDHDr | Cell Ranger | None | 7.2.0 | None | pipeline | None | None | None | None | 2024-04-18 09:10:13.580309+00:00 | 2024-04-18 09:10:13.580329+00:00 | 1 |
| 3 | NIIKiTU4C6xElS88 | Chromium 10x upload | None | None | None | pipeline | None | None | None | None | 2024-04-18 09:10:12.802540+00:00 | 2024-04-18 09:10:12.802560+00:00 | 1 |
| 2 | HjPqSbaXb2nuwmIx | GWS CRIPSRa analysis | None | None | None | notebook | None | None | None | None | 2024-04-18 09:10:11.876789+00:00 | 2024-04-18 09:10:11.876808+00:00 | 1 |
| 1 | bl05Pd2Sv77D2Hei | Upload GWS CRISPRa result | None | None | None | app | None | None | None | None | 2024-04-18 09:10:10.862820+00:00 | 2024-04-18 09:10:10.862839+00:00 | 1 |

User

| id | uid | handle | name | created_at | updated_at |
|---|---|---|---|---|---|
| 2 | bKeW4T6E | testuser2 | Test User2 | 2024-04-18 09:10:11.869637+00:00 | 2024-04-18 09:10:13.572512+00:00 |
| 1 | DzTjkKse | testuser1 | Test User1 | 2024-04-18 09:10:09.309611+00:00 | 2024-04-18 09:10:12.794693+00:00 |


!lamin login testuser1

!lamin delete --force mydata

!rm -r ./mydata

✅ logged in with email testuser1@lamin.ai (uid: DzTjkKse)

💡 deleting instance testuser1/mydata

❗ manually delete your stored data: /home/runner/work/lamin-usecases/lamin-usecases/docs/mydata